Higher education institutions are racing to keep up with ever-evolving student and workforce demands, and policymakers have no shortage of (ostensibly) good ideas to improve the sector. But are all these good ideas as potent or as effective as the hype (or hope) promises?

In three specific buzz-inducing cases, it's worth taking a step back to evaluate the bigger picture before policymakers pass legislation, or institutions alter programs, that may not produce the desired outcomes.

Misconception: Free college will solve higher ed’s access problems.

Truth: Free college may score votes, but for most students, it doesn’t make college more accessible. Rethinking the traditional college business model could.

The hot solution to college affordability: make it free. My colleagues at the Christensen Institute have written fairly extensively about "free college" proposals. Our diagnosis is that the key issue facing higher education is a problematic business model, and subsidizing access to a broken system doesn't fix the system. Who should pay for education is an important policy question, but it can't be used to cover up, or avoid dealing with, the question of why college is so expensive in the first place. There are real problems in U.S. higher education, and "free college" is an easy sound bite that lets politicians attract voters without explaining or solving those problems.

What can improve this situation? Rethinking higher ed's broken traditional business model is one way, and Western Governors University (WGU) is a good example of how an innovative institution can scale a high-quality, low-cost model aimed at serving learners otherwise shut out of the traditional system. WGU recently surpassed 100,000 graduates and has more than 100,000 students currently enrolled. These milestones demonstrate that rethinking how higher education operates and how it serves its students can create a win-win for institutions and the students they serve.

Misconception: College may not be worth it anymore.

Truth: It depends. Factors such as whether a student graduates and what she decides to major in matter.

Adjusted for inflation, the salaries of college graduates have stayed more or less flat since baby boomers first began crossing the commencement stage. The costs of college, on the other hand, continue to creep higher. This has had tough consequences for the millennial generation, which is more educated than prior generations but is struggling with debt and taking the slow road to household formation. At today's cost and in today's economy, the growing national sentiment seems to be that college may not be worth it anymore.

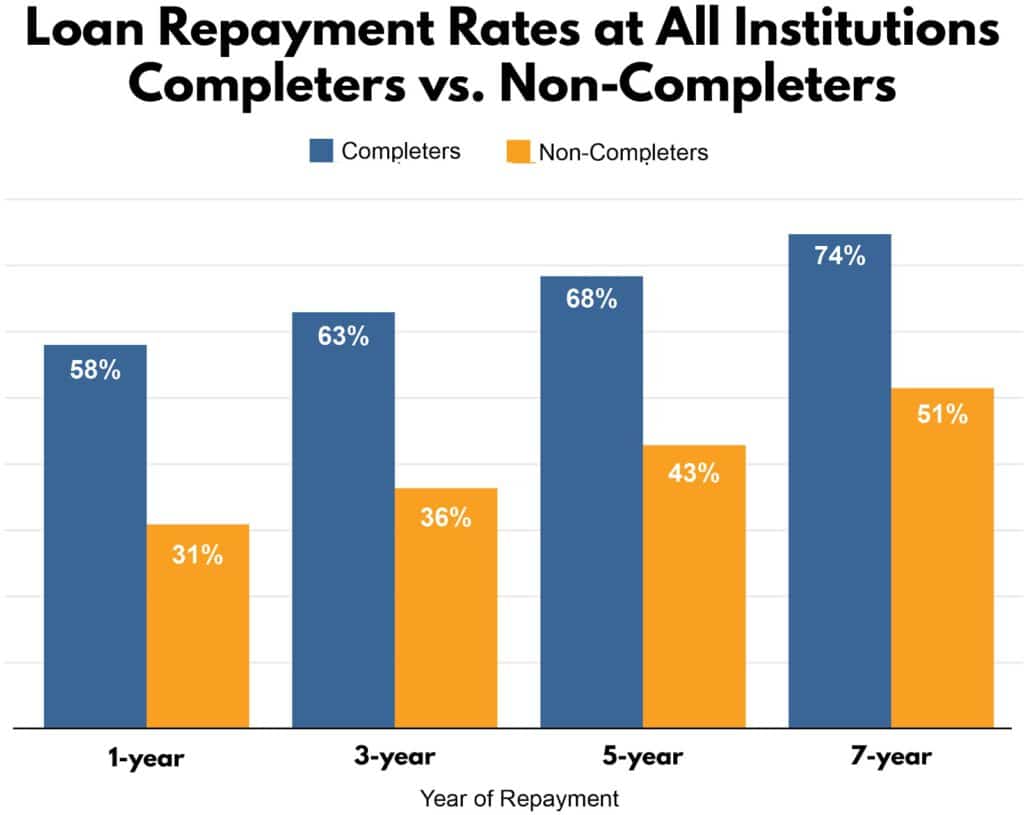

Whether college is worth it, however, depends on several factors: whether a student graduates and can therefore afford to pay back their student loans (though data also suggest that both graduates and non-graduates find it hard to repay student loans); what a student majors in and the potential payoff of specific careers; and whether a student treats their college education as an investment, meaning having a clear view of the desired degree, the requirements to get there, and a semester-by-semester plan for meeting those requirements in order to save money and valuable time.

Misconception: Career changes are frequent, so re-skilling and up-skilling are critical.

Truth: Re-skilling and up-skilling are critical because the nature of jobs may be changing.

The economy is changing: workers are now expected to experience an average of seven career changes over their lives. Or are they? While the seven-careers narrative is ubiquitous, the data behind such statistics are scarce. Far from depicting a labor market zooming toward chaos and change, the Bureau of Labor Statistics' studies on job tenure are actually rather boring. In 2016, the median time an employee had worked for their current employer was 4.2 years. A decade earlier, in 2006, it was 4.0 years. And in 1996, it was 3.8 years. For the median employee, the numbers are ever-so-slowly trending toward greater job stability, not away from it.

Does this mean that the narrative about workers needing to re-skill in order to navigate an ever-more turbulent economy is all hype? Well, not exactly.

A more plausible hypothesis centers not on job instability but on the changing nature of jobs themselves. The World Economic Forum's Future of Jobs report notes that "technological disruptions such as robotics and machine learning—rather than completely replacing existing occupations and job categories—are likely to substitute specific tasks previously carried out as part of these jobs, freeing workers up to focus on new tasks and leading to rapidly changing core skill sets in these occupations."

In other words, the ground may be shifting under our feet, but in more subtle ways than the seven-career-changes-in-a-lifetime narrative leads us to expect. Lifelong learning is essential for staying in our jobs, not necessarily because we are constantly changing them. This also means that re-skilling isn't just for people changing jobs; it's essential for everyone.